Impact of regulation on children’s digital lives

Research published today by the joint 5Rights-LSE Digital Futures for Children centre into changes made by 50 companies reveals that UK and EU laws for children’s safety and privacy are leading to significant changes to tech services, governance, moderation strategies, information and tools, as well as default settings. The most impactful changes include social media accounts set to private by default, changes to recommender systems and restrictions on targeted advertising to children.

The report Impact of regulation on children’s digital lives examines how the UK’s Age Appropriate Design Code (AADC) and Online Safety Act (OSA), as well as the EU’s Digital Services Act (DSA), have affected services, for UK users in particular. It looks at changes publicly announced by companies over the period 2017–24, as well as material provided directly by the 8 companies that answered a request for information sent to 50 businesses.

The research recorded a total of 128 changes announced by Meta, Google, TikTok and Snap over the period – either in anticipation of legislation or regulation, or following its introduction – with a peak of 42 changes in 2021, the year the AADC came into effect.

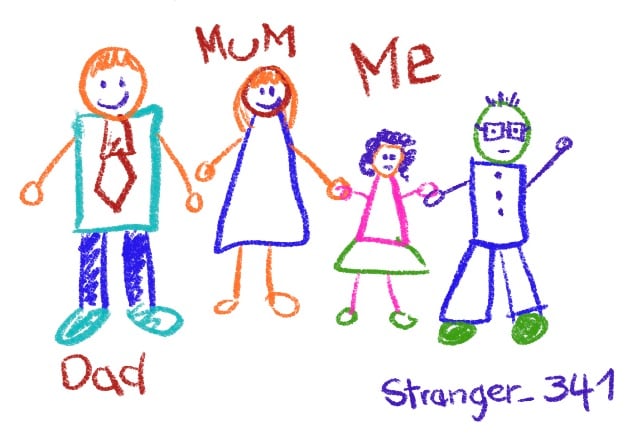

As the report shows, the most impactful changes are those made by design and default – changes to product design that take responsibility for aligning digital services with children’s rights and development needs. The research revealed, however, that companies are relying heavily on tools such as parental controls in response to legislation and regulation. As the report notes:

“While there is a valid relationship between the use of tools and the requirements in the AADC, GDPR and DSA, there is a risk of over-reliance as a privacy and safety measure. The evidence indicates low levels of use and efficacy for parental controls, plus risks to child autonomy. This therefore presents a risk of reliance to the exclusion of other measures.”

Drafted by the former UK Deputy Information Commissioner Steve Wood, the report highlights how “the UK and EU approach to legislation and regulation seeks to ensure companies’ systems and processes embed safety by design and duties of care, to realise the rights of children in relation to the digital environment, so they can learn, explore and play online safely” and that, with the AADC, DSA and OSA passed, “this is the optimal moment to set out a baseline for monitoring the impact” for children online.

A broad lack of transparency, however, hinders this monitoring. Not all changes are publicly announced and, of the 50 companies Steve Wood wrote to for evidence, only 8 answered, and then only partially.

The report sets out 11 recommendations to companies and regulators:

- Data protection and online safety regulators should work via international cooperation mechanisms, such as the Global Online Safety Regulators Network and Global Privacy Assembly, to agree best practice across jurisdictions with the aim of creating global norms.

- Companies subject to the DSA, OSA and AADC should ensure that solutions address the full range of risks, as detailed in the OECD typology of risks, including support measures related to conduct and contact risks.

- Companies should work across industry to introduce best practice rather than each working separately, to ensure that different solutions don’t leave unnecessary gaps in safety provision.

- The UK Government should update the OSA to introduce mandatory access to data for child safety research, learning from the DSA’s approach and implementation by the European Commission.

- The European Commission and Ofcom should explore how data related to child safety changes could be recorded and logged transparently in a ‘child online safety tracking database’.

- The UK Government, Ofcom, Information Commissioner’s Office (ICO) and European Commission should consult on how to assess the outcomes of their child safety regimes, including consideration of children’s wider rights under the United Nations (UN) Convention on the Rights of the Child.

- The ICO, Ofcom and European Commission should provide guidance as to how platforms should record and document changes to the design and governance of their platforms related to child privacy and safety.

- Companies should provide a single web portal that allows researchers and other stakeholders to see a record of child privacy and safety changes implemented, by date. The changes should also be made available as an API and in machine-readable format. This should initially be developed as regulatory guidance and made into a statutory requirement if evidence indicates formal provision is needed.

- Companies should provide explicit confirmation of which jurisdiction or region each change applies to, and update this information as it changes.

- All EU Data Protection Authorities and the ICO should ensure that they assess the risks related to children’s online privacy when developing their regulatory strategies, including measures to assess the outcomes achieved. All Data Protection Authorities should also include a section on children in their annual reports, including outcomes of investigations that did not result in formal action.

- Data protection and online safety regulators should publish their expectations of good practice, require companies to meet or better them, and seek to spread good practice across sectors.
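
To illustrate the recommendation that changes be made available "as an API and in machine-readable format", the sketch below shows one way a dated record of child privacy and safety changes could be represented and exported as JSON. It is an illustration only and is not taken from the report: the `ChangeRecord` fields, the `to_machine_readable` function and the company name `ExampleCo` are hypothetical, and the example entries are invented placeholders rather than real announcements.

```python
import json
from dataclasses import dataclass, asdict
from datetime import date
from typing import Iterable, Tuple


@dataclass(frozen=True)
class ChangeRecord:
    """One child privacy or safety change (hypothetical schema)."""
    company: str
    announced: date                  # date the change was announced
    summary: str                     # short description of the change
    jurisdictions: Tuple[str, ...]   # where the change applies, e.g. ("UK", "EU")
    regulations: Tuple[str, ...]     # related regimes, e.g. ("AADC", "DSA")


def to_machine_readable(records: Iterable[ChangeRecord]) -> str:
    """Return the records as a JSON array, ordered by announcement date."""
    ordered = sorted(records, key=lambda r: r.announced)
    payload = []
    for record in ordered:
        item = asdict(record)
        # JSON has no date type, so dates are written as ISO 8601 strings.
        item["announced"] = record.announced.isoformat()
        payload.append(item)
    return json.dumps(payload, indent=2)


if __name__ == "__main__":
    # Invented placeholder entries, for illustration only.
    example = [
        ChangeRecord("ExampleCo", date(2021, 9, 1),
                     "Accounts for under-18s set to private by default",
                     ("UK",), ("AADC",)),
        ChangeRecord("ExampleCo", date(2020, 1, 15),
                     "Targeted advertising to children restricted",
                     ("UK", "EU"), ("DSA",)),
    ]
    print(to_machine_readable(example))
```

Recording the jurisdictions on each entry reflects the separate recommendation that companies confirm which jurisdiction or region each change applies to; a real scheme would follow whatever format regulators set out in guidance.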

If the rights of children are to be respected in the digital environment, companies will need to embed safety by design and duties of care principles – through regulation, we can get there.

Read the full report to learn more about the specific changes and recommendations.

You can view phase 2 (2024-2026) here.